Your Attempt To Solve Debate Will Not Work

As a blogger, I hear about lots of projects to “solve debate”, or “disagree better”, or “map arguments”. Often these are ACX grant applications. I always turn them down. They’re well-intentioned, sophisticated, and doomed.

I appreciate that Internet arguments usually don’t go well, that there are lots of ways to improve them, and that this is a worthy cause. But I’ve also seen a dozen projects of this sort fail. Here’s why I think yours will too:

“Debate” almost never corresponds to mappable arguments: The simplest “solve debate” proposal is the argument map. Some technology helps people decompose arguments into premises and conclusions, then lets skeptics point out where the premises are wrong, or where the conclusion doesn’t follow from the premise.

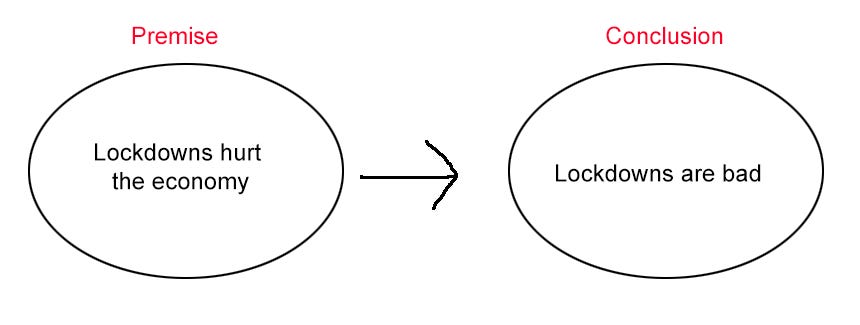

But almost no real argument works that way. Even in the best-case scenario, where an argument almost works that way, it doesn’t really work that way. Suppose you’re having an argument about COVID lockdowns. Someone says “lockdowns hurt the economy”. Now you’re stuck in a giant fight about whether that claim is true (answer: compared to the counterfactual, certain kinds of lockdown measures hurt certain economic indicators in certain situations). But even if it is true, so what? What conclusion can you draw from that premise? Through the traditional argument-mapping structure, none at all. You can’t draw the conclusion “lockdowns are bad” until you quantify the magnitude of the economic effect, the magnitude of all the other negative effects, the magnitude of whatever benefits you think lockdowns have, compare costs and benefits for some specific lockdown proposal, and have some theory of the Good or at least of interpersonal utility comparison. And even if you did all of that, someone could accuse you of missing the point if they thought that lockdowns were bad for civil rights reasons regardless of their costs vs. benefits, or if we’re obligated to consider the needs of the weakest among us more than the needs of the masses, or anything else. The point is that a set of little circles like so:

…isn’t just wrong, but wrong in such a fundamental, utterly-doomed way that using it to structure your thinking will make things a million times worse.

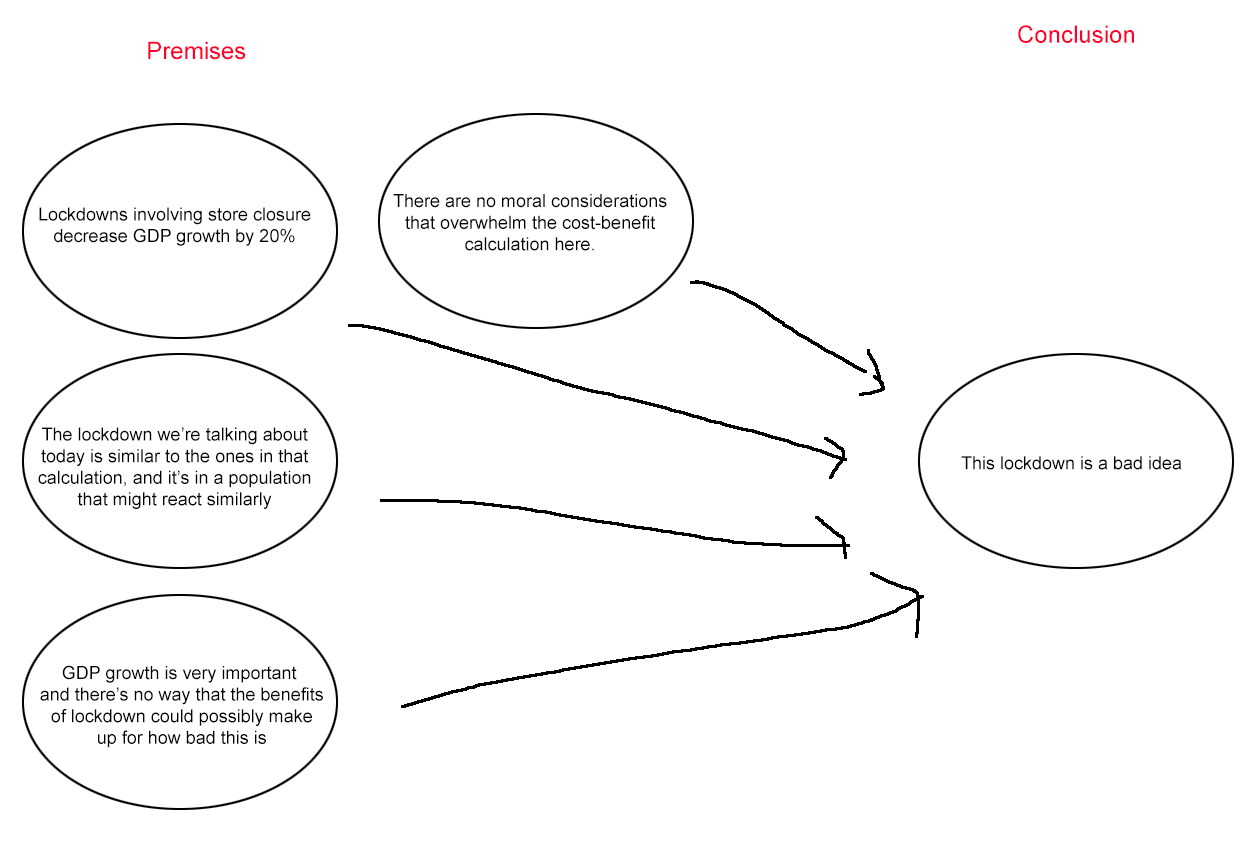

Can you solve this by increasing the number of circles?

This is better, but I think still misleads rather than helps - shouldn’t “this lockdown is a bad idea” take a probability as type? Doesn’t pretending it’s a logically necessary Aristotelian syllogism just obfuscate that? Doesn’t it train you to point to one specific link and shout “argumentum ad verecundiam, that’s a fallacy, you lose!”, whereas real life is almost never that simple? Once you have enough of these circles, aren’t you fighting the argument-mapping idea rather than benefiting from it?
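To make the “probability as type” point concrete, here is a minimal Python sketch. Everything in it is an assumption for illustration: the `Claim` class, both function names, the example credences, and especially the independence assumption used to combine them. It is not how any real argument-mapping tool works. It only shows that a chain of individually plausible links can pass a true/false check while the conclusion stays more likely false than true.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Claim:
    """One link in an argument, held with a credence instead of True/False."""
    text: str
    credence: float  # subjective probability in [0, 1]; made-up numbers below


def syllogism_verdict(claims: list[Claim]) -> bool:
    """Argument-map style: accept the conclusion if every link looks more likely than not."""
    return all(c.credence > 0.5 for c in claims)


def chained_credence(claims: list[Claim]) -> float:
    """Probability that every link holds, ASSUMING the links are independent.

    Independence is a strong simplification; real premises are usually correlated.
    """
    p = 1.0
    for c in claims:
        p *= c.credence
    return p


# Hypothetical credences, chosen only for illustration.
chain = [
    Claim("this lockdown hurts the economy", 0.9),
    Claim("the economic harm outweighs the health benefit", 0.6),
    Claim("cost-benefit analysis is the right frame at all", 0.7),
]

map_verdict = syllogism_verdict(chain)   # every link passes individually
conclusion_p = chained_credence(chain)   # roughly 0.38 under independence
```

With these invented numbers, the map says the argument goes through, while the credence in the conclusion is below one half. Pointing at a single link and shouting “fallacy” doesn’t capture that.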

I don’t know, maybe some people with poor working memory who really hate holding an entire argument in their head might benefit from this kind of thing. I think for everyone else it just makes things more complicated.

Arguments rarely hinge on one person being simply wrong and stupid: A staple of this genre is something like “map out the specific facts people are relying on, then figure out which of those facts are false”. Or “map out the specific logical steps people are taking, then figure out which of those steps is a fallacy”.

But arguments rarely hinge on false facts. Even an insane person like Alex Jones rarely says specific false facts. In the few cases where there are specific false facts, most people, when forced, are happy to jettison the false fact and continue making the argument on other grounds.

And arguments rarely hinge on specific named fallacies. Even when they do, those fallacies often provide some kind of useful information (it’s technically an ad hominem fallacy to say that Alex Jones is wrong about Sandy Hook because he’s a lying loon, but I actually know very little about his Sandy-Hook-related arguments, and I think relying on his general mendacity and looniness is a useful proxy here).

Last year, I was in a panel discussion of why people disagree about AI risk. The closest we came to an answer was that some people place a lot of weight on a theoretical argument for intelligence explosion, and other people don’t really trust theoretical arguments and stick to a prior of “things rarely change very rapidly”. Nobody here is using a false fact or committing a fallacy - they’re just weighing theoretical and empirical evidence differently.

The hardest problem for any social technology is getting users: We’ve talked about this before for dating apps. And if it’s that hard to lure people in with the promise of sex, it’s probably even harder to lure them in with the promise of logical accuracy.

Like dating, arguments require two compatible people. That means your app is useless until it has a big enough population that, whenever someone wants to argue, there will be a compatible person willing to take the other side of that argument in a reasonable amount of time. That’s hard to bootstrap.
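A back-of-the-envelope sketch of the bootstrapping problem, in Python. The model is an assumption, not anything measured: each user is independently available and willing to take the other side of a given argument within the waiting window with probability `p_available`, and both function names are hypothetical.

```python
import math


def match_probability(users: int, p_available: float) -> float:
    """Chance that at least one of `users` people will take the other side.

    ASSUMES each user is independently available with probability p_available.
    """
    return 1.0 - (1.0 - p_available) ** users


def users_needed(p_available: float, target: float) -> int:
    """Smallest user count whose match probability reaches `target` (0 < target < 1)."""
    return math.ceil(math.log(1.0 - target) / math.log(1.0 - p_available))


# Illustrative guess: 1 in 1,000 users is up for any particular argument right now.
needed = users_needed(p_available=0.001, target=0.9)
```

Under that guess, the app needs a couple of thousand active users before someone who wants to argue has a 90% chance of finding a taker, and it has to offer them something else to do until then.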

You might think: “But don’t people like arguing on the Internet?” No. Look closer. People like taking drive-by potshots on the Internet - retweeting some link that makes them feel like they’ve successfully embarrassed their ideological enemies. Other people get angry and tell them their link is stupid and they should be embarrassed instead. An argument might happen. But nobody comes into the process intending to have an argument, any more than the Great Powers of Europe intended to have World War I. The Great Powers just wanted to avenge some slights, take some easy-looking opportunities, and avoid looking weak. Just so with arguments.

Even I don’t like arguing on the Internet. I like writing a post saying that my opinion is right. Sometimes people tell me that actually, my opinion is wrong. This frustrates me. I feel obligated to respond, but the response is an unfortunate step between me voicing my opinion and everybody agreeing that my opinion is right. I am not looking for the opportunity to do this in a more finicky way, at much greater length, unless this would serve some need of mine: maybe convincing other people more efficiently. But formal argument mapping is an inefficient way of convincing people.

That means that these apps’ target demographic - people who want to argue on the Internet, but are looking for a better way to do it - doesn’t really exist.

This hasn’t worked in two thousand years of arguing: Most dating apps are doomed. But one reason for optimism about dating apps is that people have made them before. They’ve been known to occasionally work. And they’re an extension of things like matchmaking resumes and classified ads which have worked for centuries. What’s the argumentative equivalent?

Maybe there have been slight improvements in arguing since Socrates’ time. It’s probably good to know some formal logic. We’ve collected some decent maxims for spotting fallacies, like “correlation is not causation”, and a good toolbox of heuristics around evaluating evidence (e.g. RCTs > correlational studies). But in terms of the actual mechanics of arguing, has any app or institution like this really caught on? The closest thing is r/changemyview, which is just a community of people trying to argue, not any kind of mechanical change to the argument format.

I won’t be approving grants to these kinds of projects, or otherwise getting interested in them, unless someone has an incredible idea to address these issues.